Chapter 1

The Barber Who Cannot Shave Himself

Imagine a small town with exactly one barber. The barber shaves all those – and only those – who do not shave themselves. Simple enough rule. Now the question: who shaves the barber?

If he shaves himself, then he belongs to the group of people who shave themselves – and therefore should not be shaved by the barber. But he is the barber. If he does not shave himself, then he belongs to the group of people who do not shave themselves – and therefore must be shaved by the barber. But again, he is the barber. Either way, contradiction.

This is not a logic puzzle with a clever trick answer. There is no resolution. The barber cannot exist under these rules. And this seemingly innocent little story shook the foundations of mathematics in the early twentieth century.

The Liar Who Tells the Truth About Lying

The barber paradox is a modern retelling of a far older puzzle. Around 600 BCE, the Cretan philosopher Epimenides reportedly declared: “All Cretans are liars.” If Epimenides is telling the truth, then as a Cretan he is a liar – so the statement must be false. If it is false, then not all Cretans are liars – which is consistent but uninformative. The strict version sharpens the paradox to a single sentence:

“This sentence is false.”

If the sentence is true, then what it says holds – it is false. If it is false, then what it says does not hold – so it is true. The sentence oscillates between true and false without ever settling. It is self-referential: it talks about itself. And that self-reference is the source of the paradox.

For millennia, philosophers treated the Liar as a curiosity – an amusing puzzle for dinner parties, not a serious problem. That changed in 1901.

Frege’s Life’s Work in Ruins

Gottlob Frege had spent decades building the Grundgesetze der Arithmetik – an ambitious attempt to derive all of arithmetic from pure logic. Two volumes, hundreds of pages of meticulous formal deductions. In 1902, just as the second volume was going to press, Frege received a letter from a young British logician named Bertrand Russell.

Russell had found a contradiction at the heart of Frege’s system. Consider the set of all sets that do not contain themselves:

Does \(R\) contain itself? If \(R \in R\), then by definition \(R \notin R\). If \(R \notin R\), then by definition \(R \in R\). Contradiction. This is Russell’s paradox – the barber story in set-theoretic clothing.

Frege’s system allowed unrestricted set formation: for any property \(P(x)\), the set \(\{x \mid P(x)\}\) was assumed to exist. Russell’s paradox showed that this principle – called naïve comprehension – is inconsistent. Not all properties define sets.

Frege responded with devastating honesty. He added an appendix to the second volume: “Hardly anything more unfortunate can befall a scientific writer than to have one of the foundations of his edifice shaken after the work is finished. This was the position I was placed in by a letter of Mr. Bertrand Russell.”

The Escape Routes

Mathematics needed repair. Several proposals emerged:

Russell’s type theory (1908): Sets are arranged in a hierarchy of “types.” A set of type \(n\) can only contain elements of type \(n-1\). The set of all sets that don’t contain themselves is simply ill-formed – you cannot ask whether a set contains itself, because a set and its elements belong to different types. Effective but cumbersome.

Zermelo–Fraenkel set theory (ZF, 1908–1922): Instead of naïve comprehension, a carefully chosen list of axioms controls which sets can be formed. The axiom of separation allows only subsets of existing sets, preventing the formation of \(R\). This became the standard foundation, usually augmented with the axiom of choice (ZFC).

Hilbert’s program (1920s): David Hilbert proposed a more ambitious solution. Don’t just patch the foundations – prove that mathematics is consistent. Find a formal system powerful enough to express all of mathematics, and then demonstrate, using only the simplest and most reliable methods, that no contradiction can ever arise within it.

Hilbert’s vision was grand: completeness (every true statement is provable), consistency (no contradictions), and decidability (an algorithm that determines the truth of any statement). He genuinely believed this was achievable. In 1930, he declared at a conference in Königsberg: “Wir müssen wissen. Wir werden wissen.” – “We must know. We will know.”

The day before that speech, in the same city, a quiet 24-year-old Austrian mathematician named Kurt Gödel had presented a result that destroyed the program entirely.

Status: Self-reference creates paradoxes. Russell’s paradox shattered naïve set theory. Hilbert proposed to rescue mathematics by proving its own consistency. Gödel was about to show that this is impossible – but first, we need a tool from Georg Cantor.

Chapter 2

Cantor’s Diagonal Argument

In the 1870s, Georg Cantor asked a question that seemed almost absurd: are there different sizes of infinity? Common sense says no – infinity is infinity. Cantor proved that common sense is wrong.

Countable Infinity

A set is countably infinite if its elements can be listed in a sequence – first element, second element, third element, and so on, eventually reaching every element. The natural numbers \(\mathbb{N} = \{0, 1, 2, 3, \ldots\}\) are the prototype.

Surprising fact: the integers \(\mathbb{Z}\) are also countable, even though they extend in both directions. Just interleave positives and negatives: \(0, 1, -1, 2, -2, 3, -3, \ldots\). Every integer appears exactly once.

Even more surprising: the rational numbers \(\mathbb{Q}\) are countable. Cantor showed this with a beautiful zigzag argument over a two-dimensional grid of fractions. Despite being dense – between any two rationals there are infinitely many others – they can still be listed.

The Diagonal Proof

Now Cantor asked: are the real numbers also countable? His proof that they are not is one of the most elegant arguments in all of mathematics.

Suppose, for contradiction, that we could list all real numbers between 0 and 1. Write them out in decimal:

| \(r_1\) = | 0. | 5 | 1 | 0 | 3 | 7 | … |

| \(r_2\) = | 0. | 4 | 1 | 3 | 9 | 2 | … |

| \(r_3\) = | 0. | 8 | 2 | 7 | 1 | 8 | … |

| \(r_4\) = | 0. | 3 | 5 | 0 | 6 | 4 | … |

| \(r_5\) = | 0. | 9 | 7 | 3 | 8 | 5 | … |

| ⋮ | |||||||

Now construct a new number \(d\) by reading down the diagonal – the first digit of \(r_1\), the second digit of \(r_2\), the third digit of \(r_3\), and so on – and then changing every digit. For example, replace each digit \(a\) with \(a + 1 \pmod{9}\) (wrapping 9 to 0):

The number \(d\) differs from every number on the list. It differs from \(r_1\) in the first decimal place. From \(r_2\) in the second. From \(r_n\) in the \(n\)-th. So \(d\) is not on the list – contradicting our assumption that the list contained all real numbers.

Therefore the real numbers are uncountable. There are strictly more real numbers than natural numbers. Infinity comes in sizes, and the infinity of the reals is larger than the infinity of the naturals.

The Power of Diagonalization

Cantor published his diagonal argument in 1891. It looks like a curiosity about set theory, but the method turned out to be one of the most powerful proof techniques in all of mathematics and computer science. The pattern is always the same:

1. Assume a complete listing of some collection.

2. Use the listing itself to construct an object that cannot be on the list.

3. Contradiction.

We will see this same pattern in Gödel’s proof (Chapter 3) and Turing’s proof (Chapter 5). The diagonal argument is the common ancestor of the deepest impossibility results in logic and computation.

Try it yourself

Enter any five real numbers (as decimals). The visualization builds the diagonal in real time and shows you the constructed number that escapes the list.

Cantor’s Legacy

Cantor’s work was fiercely resisted. Leopold Kronecker called him a “corrupter of youth.” Henri Poincaré called set theory a “disease.” Cantor suffered from recurring bouts of depression and spent his final years in a sanatorium. He died in 1918 without seeing his ideas accepted by the mainstream.

Today, his diagonal argument is taught in every first-year logic course, and his set theory is the foundation on which virtually all of modern mathematics is built. The irony is deep: the man who was attacked for his ideas about infinity produced a proof technique so powerful that it exposed the limits of mathematics itself.

Status: Cantor showed that the real numbers are uncountable using a diagonal argument: assume a complete list, use the list to construct something that cannot be on the list, reach a contradiction. This technique – diagonalization – is the engine behind the deepest impossibility results of the twentieth century. Gödel will use it next.

Chapter 3

Gödel’s Masterstroke

It is 1930. David Hilbert, the most influential mathematician alive, is confident that the foundations crisis is nearly resolved. His program calls for a formal system that captures all of mathematics and a finitary proof that the system is consistent. Several of his colleagues are working on the details.

Kurt Gödel, twenty-four years old, has just completed his doctorate in Vienna. His thesis proved that first-order logic is complete – every logical truth has a proof. A triumph for Hilbert’s program. But then Gödel turned to a deeper question: is arithmetic also complete? Can every true statement about natural numbers be proved?

Hilbert’s Three Demands

Hilbert wanted a formal system \(F\) satisfying three properties:

Consistency: \(F\) never proves both \(\varphi\) and \(\neg\varphi\). No contradictions.

Completeness: For every statement \(\varphi\) in the language of \(F\), either \(\varphi\) or \(\neg\varphi\) is provable. No undecidable questions.

Decidability: There exists a mechanical procedure (what we would now call an algorithm) that, given any statement \(\varphi\), determines whether \(\varphi\) is provable in \(F\).

Gödel shattered the first two demands. Turing would later shatter the third.

Making Arithmetic Talk About Itself

Gödel’s key insight was breathtaking in its audacity: he made arithmetic talk about itself. The Liar paradox says “this sentence is false.” Gödel constructed an arithmetic statement that says “this statement is not provable.”

But how can a statement about numbers refer to itself? Numbers don’t know about proofs. Gödel’s trick: assign a unique natural number to every symbol, every formula, and every proof in the formal system. This encoding is called Gödel numbering.

The details are intricate but the idea is simple. Every symbol gets a number:

| Symbol | 0 | S | + | × | = | ( | ) | ¬ | ∀ |

|---|---|---|---|---|---|---|---|---|---|

| Code | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |

A formula like \(0 = 0\) becomes the sequence \(\langle 1, 5, 1 \rangle\), which gets encoded as a single number via prime factorization: \(2^1 \cdot 3^5 \cdot 5^1 = 2 \cdot 243 \cdot 5 = 2430\). A proof is a sequence of formulas, which becomes a sequence of Gödel numbers, which becomes a single (very large) number.

The crucial observation: the property “\(n\) is the Gödel number of a provable formula” is an arithmetic property – it can be expressed using only the basic operations of addition, multiplication, and quantifiers over natural numbers. Proofs about proofs become statements about numbers.

Try it yourself

Type a simple formula and watch it get encoded into a Gödel number step by step. Then see how the number encodes back into the original formula.

The Gödel Sentence

Using his numbering, Gödel constructed a formula \(G\) with the following property:

\(G\) says: “The formula with Gödel number \(\ulcorner G \urcorner\) is not provable in \(F\).”

In other words, \(G\) says “I am not provable.”

Now the diagonal argument bites. Is \(G\) true or false?

Case 1: Suppose \(G\) is provable. Then \(F\) proves \(G\). But \(G\) says “I am not provable.” So if \(G\) is provable, it is also false. A consistent system does not prove false statements. So if \(F\) is consistent, \(G\) cannot be provable.

Case 2: So \(G\) is not provable. But that is precisely what \(G\) asserts! So \(G\) is true but unprovable.

This is the First Incompleteness Theorem (1931):

Gödel’s First Incompleteness Theorem: Any consistent formal system \(F\) that is powerful enough to express basic arithmetic contains statements that are true but not provable in \(F\). In other words, \(F\) is incomplete.

Note the careful conditions: the system must be consistent (no contradictions) and sufficiently powerful (able to express arithmetic – roughly, Peano arithmetic or anything stronger). Trivial systems or very weak logics can escape, but any system capable of doing real mathematics cannot.

Can’t We Just Add \(G\) as an Axiom?

A natural reaction: if \(G\) is true but unprovable, just add \(G\) as a new axiom! Call the extended system \(F'\). Problem solved?

No. Gödel’s construction works for any sufficiently powerful consistent system. So \(F'\) has its own Gödel sentence \(G'\), which is true but unprovable in \(F'\). Add \(G'\), get \(F''\), which has \(G''\), and so on. The incompleteness is inescapable. You can never patch it away.

This is not a bug in arithmetic. It is a structural feature of any formal system that can talk about itself.

The Connection to the Liar

Gödel himself acknowledged the parallel. The Liar sentence says “I am false.” Gödel’s sentence says “I am not provable.” The Liar leads to a paradox because truth and falsehood are exhaustive – a sentence must be one or the other. Gödel’s sentence avoids paradox because provability and truth are not the same thing. A sentence can be true without being provable. Gödel showed that such sentences must exist.

The Liar paradox is a bug. The Gödel sentence is a theorem.

Status: Gödel used a diagonal argument to construct a sentence that says “I am not provable.” If the system is consistent, this sentence is true but unprovable. Arithmetic – and any system powerful enough to express it – is necessarily incomplete. But there is worse news.

Chapter 4

The Second Blow

The first incompleteness theorem says that arithmetic is incomplete – there are truths it cannot prove. Painful, but perhaps tolerable. After all, Hilbert’s primary concern was consistency. Even if some exotic statements slip through, surely we can at least prove that the system doesn’t contradict itself?

Gödel’s second theorem closes this escape route.

The Second Incompleteness Theorem

The statement “\(F\) is consistent” can itself be expressed as an arithmetic sentence. Consistency means: there is no number \(n\) that encodes a proof of \(0 = 1\) (or any other contradiction). In Gödel’s encoding, this becomes:

Gödel proved that if \(F\) is consistent, then \(F\) cannot prove \(\text{Con}(F)\).

Gödel’s Second Incompleteness Theorem: No consistent formal system \(F\) that is powerful enough to express basic arithmetic can prove its own consistency.

The intuition is elegant: the first theorem showed that \(G\) (“I am not provable”) is true if and only if \(F\) is consistent. So proving consistency is at least as hard as proving \(G\). But \(G\) is unprovable. Therefore consistency is also unprovable.

Hilbert’s Program: Dead on Arrival

Recall Hilbert’s three demands:

| Demand | Status | Killed by |

|---|---|---|

| Completeness | Impossible | Gödel’s 1st theorem (1931) |

| Provable consistency | Impossible | Gödel’s 2nd theorem (1931) |

| Decidability | Impossible | Church & Turing (1936) |

Hilbert’s program was not merely difficult – it was mathematically impossible. Not because mathematicians weren’t clever enough, but because the goals themselves were contradictory for any sufficiently powerful system.

A Subtle but Important Point

Gödel’s second theorem says that \(F\) cannot prove its own consistency. It does not say that consistency cannot be proved at all. Gerhard Gentzen proved the consistency of Peano arithmetic in 1936 – but he had to use transfinite induction up to the ordinal \(\varepsilon_0\), which goes beyond what Peano arithmetic itself can justify. You can always prove consistency from outside, using a stronger system – but that merely shifts the problem. Who proves the consistency of the stronger system?

It is turtles all the way down.

What Does It Mean?

Gödel’s theorems do not say that mathematics is unreliable, that truth doesn’t exist, or that “anything goes.” They say something far more precise and far more interesting:

Truth exceeds provability. In any formal system rich enough to do arithmetic, there are truths that the system recognizes as meaningful but cannot derive from its axioms. Mathematics is too vast to be captured by any single set of rules.

Some have drawn philosophical conclusions from this – that human mathematical intuition must transcend mechanical computation (the “Lucas–Penrose argument”). Others reject this interpretation. The debate continues. What is beyond debate is the mathematical result itself: completeness and self-proved consistency are unattainable.

Status: Gödel’s second theorem killed Hilbert’s dream. No sufficiently powerful system can prove its own consistency. The third leg of Hilbert’s program – decidability – would fall five years later, by a completely different route: computation.

Chapter 5

Turing’s Halting Problem

In 1928, Hilbert posed the Entscheidungsproblem – the “decision problem”: is there a general algorithm that can determine the truth or falsehood of any mathematical statement?

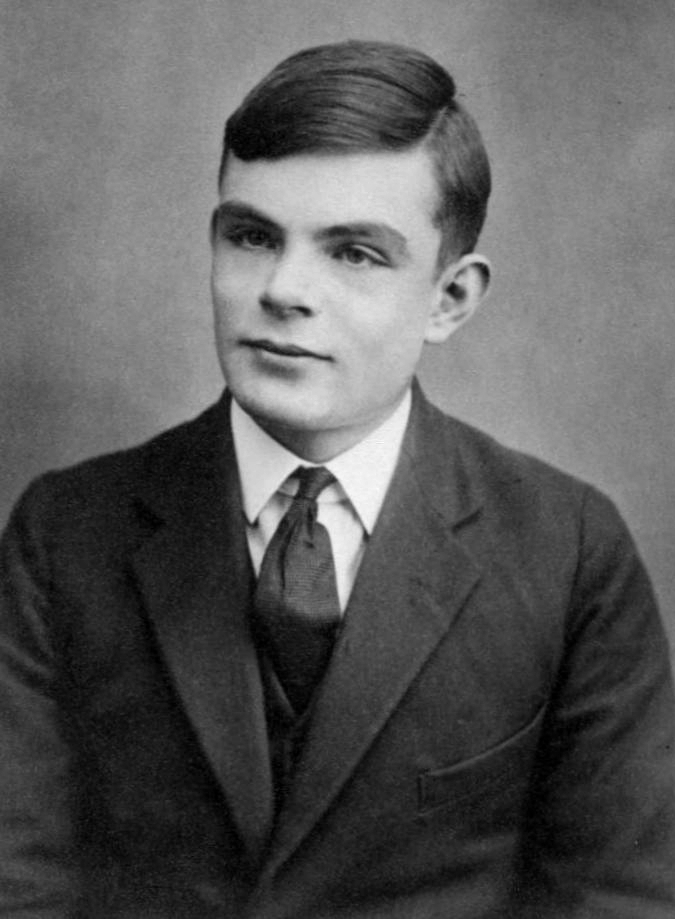

To answer this, you first need a precise definition of “algorithm.” In 1936, Alan Turing provided one.

The Turing Machine

A Turing machine is the simplest possible model of a computer. It consists of:

1. An infinite tape divided into cells, each containing a symbol.

2. A read/write head that moves one cell at a time, left or right.

3. A finite set of states and a transition table: given the current state and the symbol under the head, the machine writes a new symbol, moves the head, and transitions to a new state.

4. A special halt state: when the machine enters it, computation stops.

The Church–Turing thesis (not a theorem, but a widely accepted claim) says: anything that is “effectively computable” can be computed by a Turing machine. Every programming language, every supercomputer, every quantum computer – they are all equivalent to a Turing machine in terms of what they can compute. They may differ in speed, but not in capability.

The Universal Turing Machine

A crucial insight: a Turing machine’s transition table can itself be encoded as a string of symbols on a tape. So there exists a universal Turing machine \(U\) that takes as input the description of any other Turing machine \(M\) and an input \(x\), and simulates what \(M\) would do on \(x\).

This is the theoretical foundation of the stored-program computer. The machine and its instructions are both just data. John von Neumann would later build exactly this architecture in hardware.

The Halting Problem

Now Turing asked the question that would kill the Entscheidungsproblem. Consider the following problem:

Halting Problem: Given a Turing machine \(M\) and an input \(x\), does \(M\) eventually halt on \(x\), or does it run forever?

Turing proved that no algorithm can solve this problem in general. The proof is a diagonal argument – the same technique Cantor used, now applied to computation.

The Proof

Suppose, for contradiction, that there exists a Turing machine \(H\) that solves the halting problem. That is, for any machine \(M\) and input \(x\):

Now construct a new machine \(D\) (for “diagonal”) that takes a machine description \(\langle M \rangle\) as input and does the following:

1. Run \(H(\langle M \rangle, \langle M \rangle)\) – ask whether \(M\) halts when given its own description as input.

2. If \(H\) says “halts,” then \(D\) loops forever.

3. If \(H\) says “loops forever,” then \(D\) halts.

In other words, \(D\) does the opposite of what \(H\) predicts.

Now ask: what happens when we run \(D\) on its own description, \(D(\langle D \rangle)\)?

Case 1: Suppose \(D(\langle D \rangle)\) halts. Then \(H(\langle D \rangle, \langle D \rangle) = 1\). But then, by construction, \(D\) loops forever. Contradiction.

Case 2: Suppose \(D(\langle D \rangle)\) loops forever. Then \(H(\langle D \rangle, \langle D \rangle) = 0\). But then, by construction, \(D\) halts. Contradiction.

Both cases lead to contradiction. Therefore \(H\) cannot exist. The halting problem is undecidable.

Try it yourself

Step through the halting-problem proof interactively. See how the diagonal machine D is constructed and why feeding it its own description leads to a contradiction.

The Connection to Cantor

The structure is identical to Cantor’s diagonal argument:

| Step | Cantor | Turing |

|---|---|---|

| Assume | All reals can be listed | A halting oracle \(H\) exists |

| Construct | New real via diagonal + flip | Machine \(D\) via \(H\) + opposite |

| Self-apply | Check if \(d\) is on the list | Run \(D(\langle D \rangle)\) |

| Contradiction | \(d\) differs from every entry | \(D\) halts iff it doesn’t |

The diagonal argument is the secret weapon. Cantor uses it against set theory. Gödel uses it (via self-reference) against formal provability. Turing uses it against computation. Three different domains, one technique, one conclusion: some things are beyond reach.

The Death of the Entscheidungsproblem

Turing and Alonzo Church (using his lambda calculus) independently proved in 1936 that the Entscheidungsproblem has no solution. There is no general algorithm that decides all mathematical statements. The last pillar of Hilbert’s program had fallen.

But Turing’s paper, “On Computable Numbers, with an Application to the Entscheidungsproblem,” achieved something far beyond answering Hilbert’s question. By defining computation precisely, Turing laid the intellectual foundation for the digital computer. The impossibility result was a byproduct of creating the most important concept in twentieth-century technology.

Status: The halting problem is undecidable: no algorithm can determine whether an arbitrary program terminates. The proof uses the same diagonal technique as Cantor. Combined with Gödel’s theorems, it buries Hilbert’s program completely: mathematics is incomplete, its consistency unprovable from within, and there is no algorithm that settles all questions. But these impossibilities organize themselves into a beautiful hierarchy.

Chapter 6

The Hierarchy of Computability

Not all problems are created equal. Some are solvable. Some are solvable but take an obscenely long time. Some are outright unsolvable. The twentieth century produced a remarkably detailed map of these distinctions.

The Chomsky Hierarchy

In the 1950s, Noam Chomsky – working on the structure of natural language – classified formal grammars into a hierarchy of increasing power. Each level corresponds to a class of automata:

| Type | Grammar | Automaton | Example |

|---|---|---|---|

| 3 | Regular | Finite automaton | Email validation, regex |

| 2 | Context-free | Pushdown automaton | HTML parsing, balanced brackets |

| 1 | Context-sensitive | Linear bounded automaton | Natural language syntax |

| 0 | Recursively enumerable | Turing machine | Any computable function |

Each level is strictly more powerful than the one below. A finite automaton cannot check whether parentheses are balanced. A pushdown automaton cannot do arbitrary computation. Only a Turing machine (or equivalent) reaches the full power of computation – and even that power has limits, as we saw with the halting problem.

P vs NP: The Millennium Problem

Even among problems that are solvable, there is a dramatic hierarchy of difficulty. The most famous open question in computer science divides solvable problems into two classes:

P (Polynomial time): Problems where a solution can be found in polynomial time. Sorting a list. Finding the shortest path in a graph. Multiplying two numbers. These are “efficiently solvable.”

NP (Nondeterministic Polynomial time): Problems where a solution can be verified in polynomial time, even if finding it might take much longer. Given a proposed coloring of a map with four colors, it’s easy to check. But finding such a coloring might require exploring exponentially many possibilities.

The question P = NP? asks: is every problem whose solution can be efficiently verified also one whose solution can be efficiently found? Most computer scientists believe the answer is no, but after more than fifty years, no one has been able to prove it. The Clay Mathematics Institute offers one million dollars for a proof either way.

If P = NP, then every cryptographic system we rely on could be broken. Every optimization problem in logistics, biology, and engineering would become tractable. It would be the most consequential mathematical result in history.

If P ≠ NP (as most believe), then there are problems that are easy to check but inherently hard to solve. Creativity – finding a solution – is genuinely harder than verification – checking one. This resonates far beyond mathematics.

Beyond the Computable: The Arithmetical Hierarchy

Above the boundary of computability, there is further structure. The arithmetical hierarchy classifies undecidable problems by their degree of unsolvability:

\(\Sigma_1^0\) – the halting problem lives here. Problems of the form “there exists an \(n\) such that …”

\(\Pi_1^0\) – the complement of the halting problem. “For all \(n\), …”

\(\Sigma_2^0\) – “there exists an \(n\) such that for all \(m\), …”

And so on, with ever more alternations of quantifiers. Each level is strictly harder than the one below. The hierarchy continues through the hyperarithmetical sets, the analytical hierarchy, and beyond – into a vast landscape of undecidability that dwarfs the computable world.

Status: Computability and complexity form a layered hierarchy. The Chomsky hierarchy classifies languages by the power of the automata needed to recognize them. P vs NP stratifies solvable problems by difficulty. The arithmetical hierarchy stratifies unsolvable problems. At every level, there are problems that cannot be reduced to a lower level. The limits are structural, not accidental.

Chapter 7

Self-Reference Everywhere

We have now seen the same pattern – self-reference leading to impossibility or paradox – in four different domains. Let us make the connections explicit.

The Connection Table

| Domain | Self-referential object | Result | Year |

|---|---|---|---|

| Set theory | Set of all non-self-containing sets | Russell’s paradox | 1901 |

| Set theory | Diagonal of a supposed listing | Uncountability of \(\mathbb{R}\) | 1891 |

| Arithmetic | “I am not provable” | Incompleteness | 1931 |

| Computation | Program that analyzes itself | Undecidability of halting | 1936 |

| Semantics | “This sentence is false” | Liar paradox | ~600 BCE |

The common thread: a system becomes powerful enough to describe itself, and in that moment of self-reflection, it encounters a boundary it cannot cross.

Quines: Programs That Print Themselves

Self-reference is not always destructive. A quine is a program that outputs its own source code. No input, no file reading – the program generates itself from scratch.

Here is a quine in Python:

s = 's = %r\nprint(s %% s)'

print(s % s)The string s contains a template of the entire program, with %r as a placeholder for itself. The print statement fills the placeholder with s, producing the exact source code. Self-reference without paradox – the program does not ask whether it halts or whether it is true; it simply reproduces.

Quines exist in every Turing-complete language. This is guaranteed by Kleene’s recursion theorem (also called the fixed-point theorem): for any computable transformation \(f\), there exists a program \(e\) such that running \(e\) produces the same result as running \(f\) on \(e\)’s own description. Self-reference is not a bug – it is a theorem.

DNA: The Original Quine

The parallel to biology is irresistible. A cell’s DNA encodes the instructions for building the molecular machinery (ribosomes, polymerases) that reads and copies the DNA. The DNA is both the program and the data that the program operates on.

John von Neumann recognized this structure in the 1940s – before the discovery of DNA’s double helix. He proved mathematically that a self-reproducing automaton must contain a universal constructor (the ribosome) and a description of itself (the genome). The description must be used in two different ways: once as instructions to be executed, once as data to be copied. This is exactly what DNA does. The theory predicted the biology.

Gödel, Escher, Bach

Douglas Hofstadter’s Gödel, Escher, Bach: An Eternal Golden Braid (1979) is the definitive exploration of self-reference across domains. Hofstadter traces the pattern through:

Gödel: Arithmetic talks about itself via Gödel numbering. Incompleteness follows.

Escher: Hands drawing hands. A gallery that contains a painting of the gallery. Visual self-reference that loops back on itself, creating impossible structures.

Bach: Musical canons and fugues where themes are inverted, reversed, and layered over themselves. The Art of the Fugue ends with a fugue theme that spells B-A-C-H in German musical notation – the composer signing his name inside the music.

Hofstadter’s thesis: self-reference is not merely a source of paradox. It is the mechanism by which meaning arises. A system that can model itself develops something like awareness. Consciousness, he argues, is what happens when a system’s model of the world includes a model of itself – a “strange loop.”

Self-Reference in Modern AI

Large language models exhibit a peculiar form of self-reference. They can discuss their own architecture, generate code that modifies their own behavior (in agent frameworks), and reason about their own reasoning. When a model writes: “I should re-examine my previous answer because …”, it is performing a kind of meta-cognition – computation about computation.

Is this “real” self-reference? The debate mirrors the Lucas–Penrose debate about Gödel and minds. What is clear is that the pattern of self-reference – a system processing its own output – is everywhere, from DNA to Turing machines to neural networks. And wherever it appears, it brings both power and paradox.

Status: Self-reference is a universal pattern. It appears in logic (the Liar), set theory (Russell), arithmetic (Gödel), computation (Turing), biology (DNA), art (Escher), music (Bach), and AI (meta-reasoning). It produces paradoxes when combined with totality claims, but creativity and meaning when harnessed carefully. Quines, DNA, and strange loops are self-reference put to constructive use.

Epilogue

We Must Know. We Will Know?

In Göttingen, Germany, there is a gravestone. It belongs to David Hilbert, who died in 1943. Carved into the stone are six words:

“Wir müssen wissen. Wir werden wissen.”

“We must know. We will know.”

Hilbert spoke these words on September 8, 1930, in Königsberg – the day after Gödel had quietly presented his incompleteness result at the same conference. Hilbert apparently did not attend Gödel’s talk, or did not grasp its implications. He went on to repeat his credo for a radio broadcast that evening. The recording survives.

Was Hilbert wrong? In the strict sense, yes. We cannot know everything within any single formal system. Mathematics will always contain truths beyond the reach of proof. Computation will always face problems beyond the reach of algorithms.

But in a deeper sense, Hilbert’s optimism was not misplaced. Gödel, Turing, and the others did not discover that mathematics is broken. They discovered its shape. The limits are not walls blocking progress; they are the topology of the mathematical landscape. Knowing where the boundaries lie is itself a form of knowledge – perhaps the deepest kind.

The Busy Beaver: Pushing Against the Boundary

The Busy Beaver function \(\text{BB}(n)\) asks: what is the maximum number of 1s that an \(n\)-state Turing machine can write on a blank tape before halting? This function is well-defined but uncomputable – it grows faster than any computable function. It is the most concrete manifestation of the halting problem.

For decades, only the first four values were known: \(\text{BB}(1) = 1\), \(\text{BB}(2) = 4\), \(\text{BB}(3) = 6\), \(\text{BB}(4) = 13\). Then, in July 2024, an international collaboration of mathematicians and programmers known as the Busy Beaver Challenge (bbchallenge.org) proved:

The proof required classifying all 88,664,064 five-state Turing machines – a vast computational effort combined with clever theoretical arguments to handle the most stubborn holdouts. Some machines that seem to run forever were proved to do so; others were shown to eventually halt after writing exactly 47,176,870 ones.

\(\text{BB}(6)\) is already beyond reach. It is known that \(\text{BB}(6) > 10 \uparrow\uparrow 15\) (a power tower of 15 tens). For \(\text{BB}(7918)\), the value is independent of ZFC set theory – it cannot even be expressed within the standard axioms of mathematics. The Busy Beaver function is a concrete staircase leading from the computable into the absolutely unreachable.

The Glass Bead Game Pattern

In this blog, we have now seen five threads of the Glass Bead Game:

Quantum mechanics: \(\hat{H}\psi = E\psi\) — eigenvalues determine the allowed energies

Music: \(X'' = -\lambda X\) — eigenvalues determine the overtone frequencies

AI: \(K\boldsymbol{\alpha} = \lambda\boldsymbol{\alpha}\) — eigenvalues determine what is learned

Emergence: Simple rules → complex behavior — the whole exceeds the parts

Logic: Self-reference → incompleteness — the system cannot fully see itself

The fifth thread is different from the first four. Eigenvalues and emergence are about what is. Incompleteness is about what cannot be. It is a limit theorem – a statement about the structure of knowledge itself.

And yet it connects to the other threads. Emergence shows that simple components produce behaviors that cannot be predicted from the parts alone – a kind of incompleteness of reductionism. Quantum measurement introduces fundamental unpredictability – not from insufficient knowledge, but from the structure of nature. And the eigenvalue problems that govern physics, music, and AI all live within formal systems whose consistency we can use but never fully prove.

Hilbert’s epitaph asks us to know. Gödel’s theorem tells us we cannot know everything. But the very proof that shows us the limit is itself a piece of knowledge – a deep, beautiful, hard-won piece. Perhaps the most profound things we can know are the things we can prove we cannot know.

We must know. We will know – including what we cannot know.

Frequently Asked Questions

What does Gödel’s incompleteness theorem actually say?

Any consistent formal system powerful enough to express basic arithmetic contains true statements that cannot be proved within the system. This means no single set of axioms can capture all mathematical truth. The theorem does not say mathematics is unreliable – it says it is too rich to be fully formalized.

What is the halting problem?

The halting problem asks: given an arbitrary program and an input, will the program eventually stop or run forever? Alan Turing proved in 1936 that no algorithm can answer this question in general. The proof uses a diagonal argument – the same technique Cantor used to show that the real numbers are uncountable.

What is Russell’s paradox?

Russell’s paradox considers the set of all sets that do not contain themselves. If such a set contains itself, it shouldn’t; if it doesn’t, it should. The paradox showed that naïve set theory is inconsistent and led to the development of axiomatic set theories like ZFC.

What is P vs NP?

P is the class of problems solvable in polynomial time. NP is the class of problems whose solutions are verifiable in polynomial time. The P vs NP question asks whether every problem that is easy to check is also easy to solve. Most experts believe P ≠ NP, but no proof exists. It is one of the seven Millennium Prize Problems, with a one-million-dollar bounty.

What is BB(5)?

BB(5) = 47,176,870 is the fifth value of the Busy Beaver function, determined in 2024 by the bbchallenge.org collaboration. BB(n) gives the maximum number of 1s an n-state Turing machine can write before halting. The function grows faster than any computable function and is the most concrete manifestation of the halting problem’s unsolvability.

Read next

Related posts on ki-mathias.de:

- What Quantum Computers Do — Qubit, Bloch sphere, Qiskit

- Why e Is Special — Growth, primes, probability